
Abstract: Virtual staining uses deep learning to translate label-free images into fluorescence-specific images. It markedly reduces the complexity and phototoxicity of live-cell imaging and enables multi-channel, high-throughput, long-term, high-resolution imaging, which is of great value to biomedical research. Most existing methods rely on supervised learning with paired data. To reduce this dependence on paired data and further improve the quality of the generated images, this paper proposes MVS-CycleGAN, an unsupervised virtual staining framework that integrates a masked self-supervised mechanism. The method requires no paired images. It introduces a random masked reconstruction task that occludes parts of the input image and forces the network to complete the missing regions from semantic context, so that the model captures both the global morphology and the local texture of the target domain. This imposes an effective semantic constraint and alleviates the semantic drift commonly observed when conventional unsupervised models perform cross-domain translation. Experiments on three cell datasets show that MVS-CycleGAN outperforms conventional methods overall: FSIM reaches 0.784 and 0.565 for BJ-5ta membrane and nuclei, 0.854 and 0.830 for HEK293T membrane and nuclei, and 0.657 and 0.740 for Neuromast membrane and nuclei (improvements of 1.03%, 9.50%, 1.07%, 0.85%, 1.08%, and 5.56%, respectively). Downstream segmentation experiments further confirm that the virtually stained images are effective for quantitative analysis. These results indicate that the proposed method offers a feasible route for applying virtual staining in diverse biomedical scenarios.
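The random masked reconstruction task described in the abstract can be illustrated with a minimal NumPy sketch. The abstract does not state the patch size, the masking ratio, or the form of the reconstruction loss, so `patch=16`, `mask_ratio=0.5`, the L1 penalty, and the function names below are all assumptions for illustration, not the authors' implementation. The sketch also assumes the image height and width are multiples of the patch size.

```python
import numpy as np

def random_patch_mask(height, width, patch=16, mask_ratio=0.5, rng=None):
    """Return a (height, width) mask: 0 where occluded, 1 where visible.

    Assumes height and width are multiples of `patch` (illustrative choice).
    """
    rng = np.random.default_rng() if rng is None else rng
    grid_h, grid_w = height // patch, width // patch
    n_patches = grid_h * grid_w
    n_masked = int(round(mask_ratio * n_patches))
    flat = np.ones(n_patches, dtype=np.float32)
    # Pick distinct patches to occlude.
    flat[rng.choice(n_patches, size=n_masked, replace=False)] = 0.0
    grid = flat.reshape(grid_h, grid_w)
    # Expand every grid cell into a patch x patch block of pixels.
    return np.kron(grid, np.ones((patch, patch), dtype=np.float32))

def masked_reconstruction_loss(reconstruction, target, mask):
    """Mean absolute error over the occluded pixels only (assumed L1 form)."""
    hidden = 1.0 - mask
    return float(np.sum(np.abs(reconstruction - target) * hidden) / max(hidden.sum(), 1.0))

rng = np.random.default_rng(0)
image = rng.random((64, 64)).astype(np.float32)   # stand-in for a real image
mask = random_patch_mask(64, 64, patch=16, mask_ratio=0.5, rng=rng)
masked_input = image * mask                        # occluded network input
```

In a training loop, a generator would receive `masked_input` and be penalized with `masked_reconstruction_loss` on its output, alongside the usual adversarial and cycle-consistency terms. How these terms are weighted is not given in the abstract.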


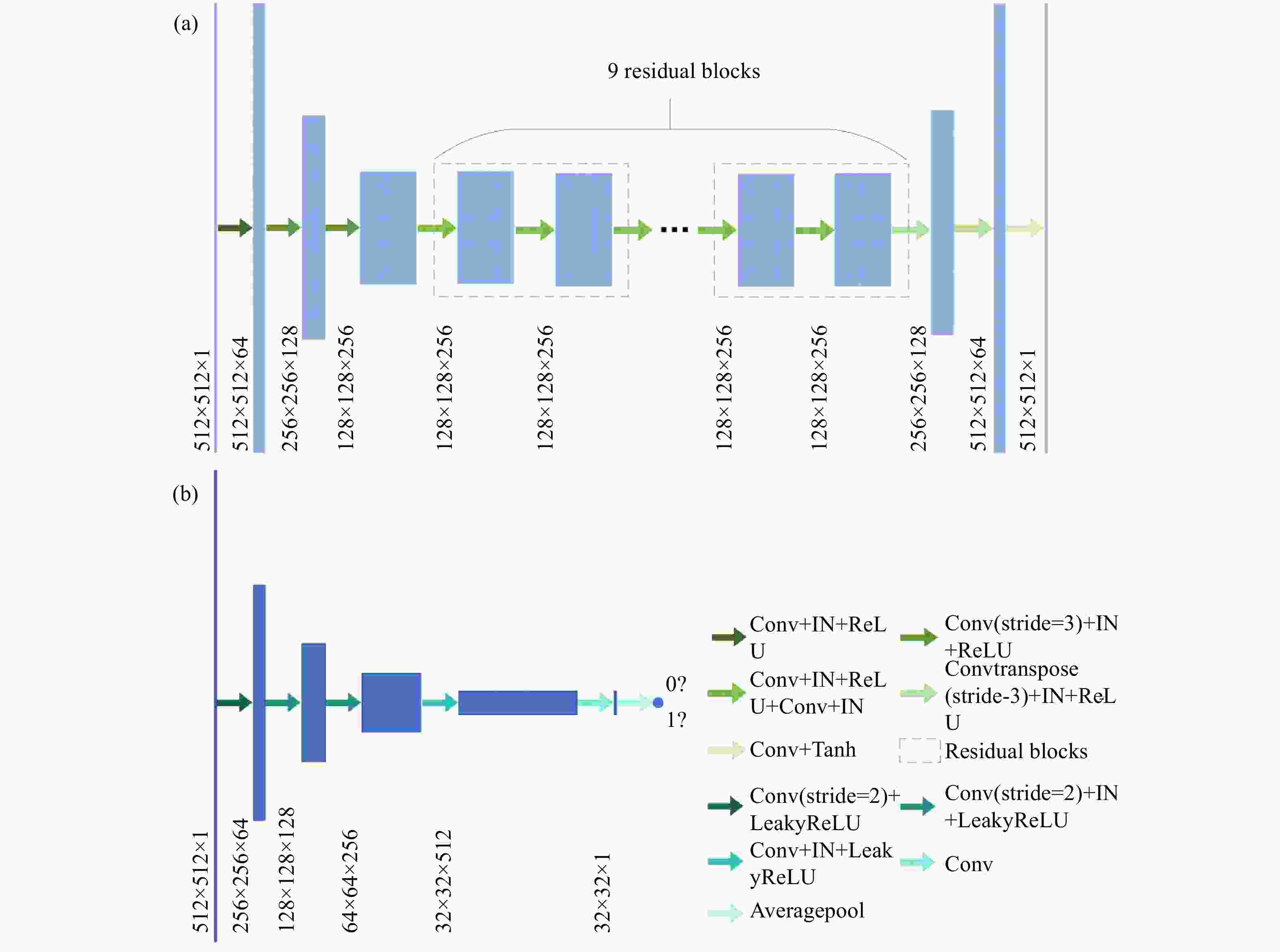

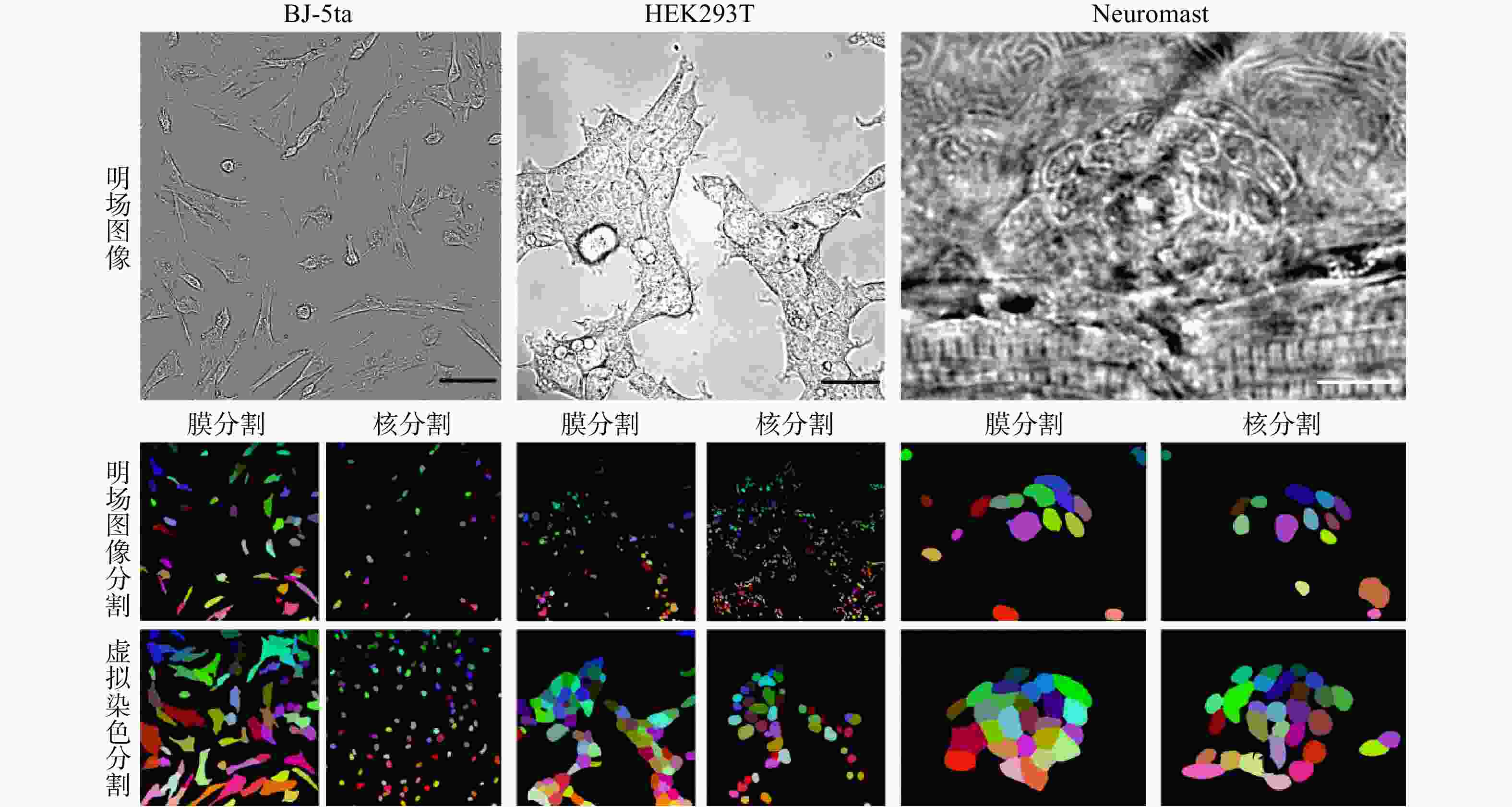

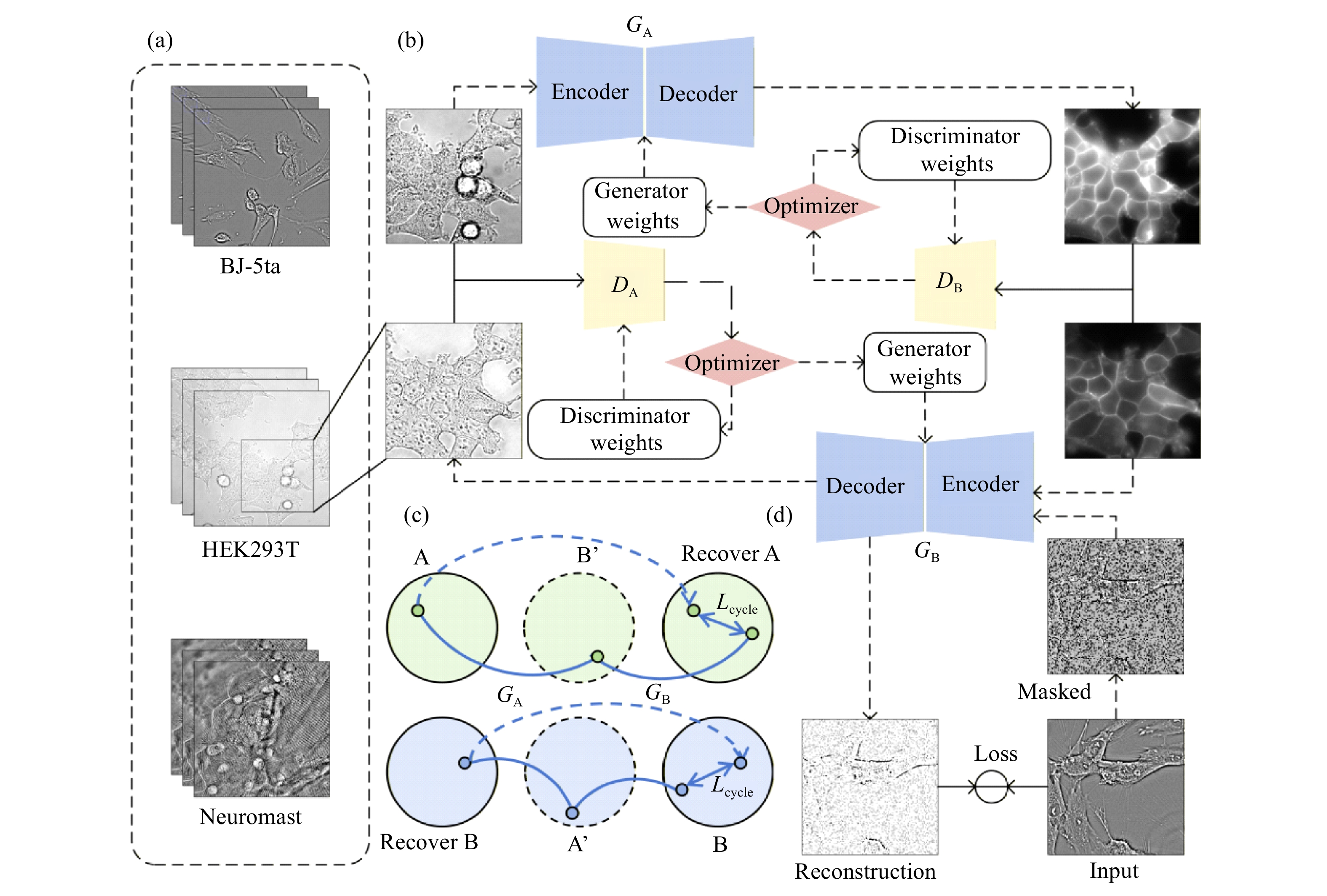

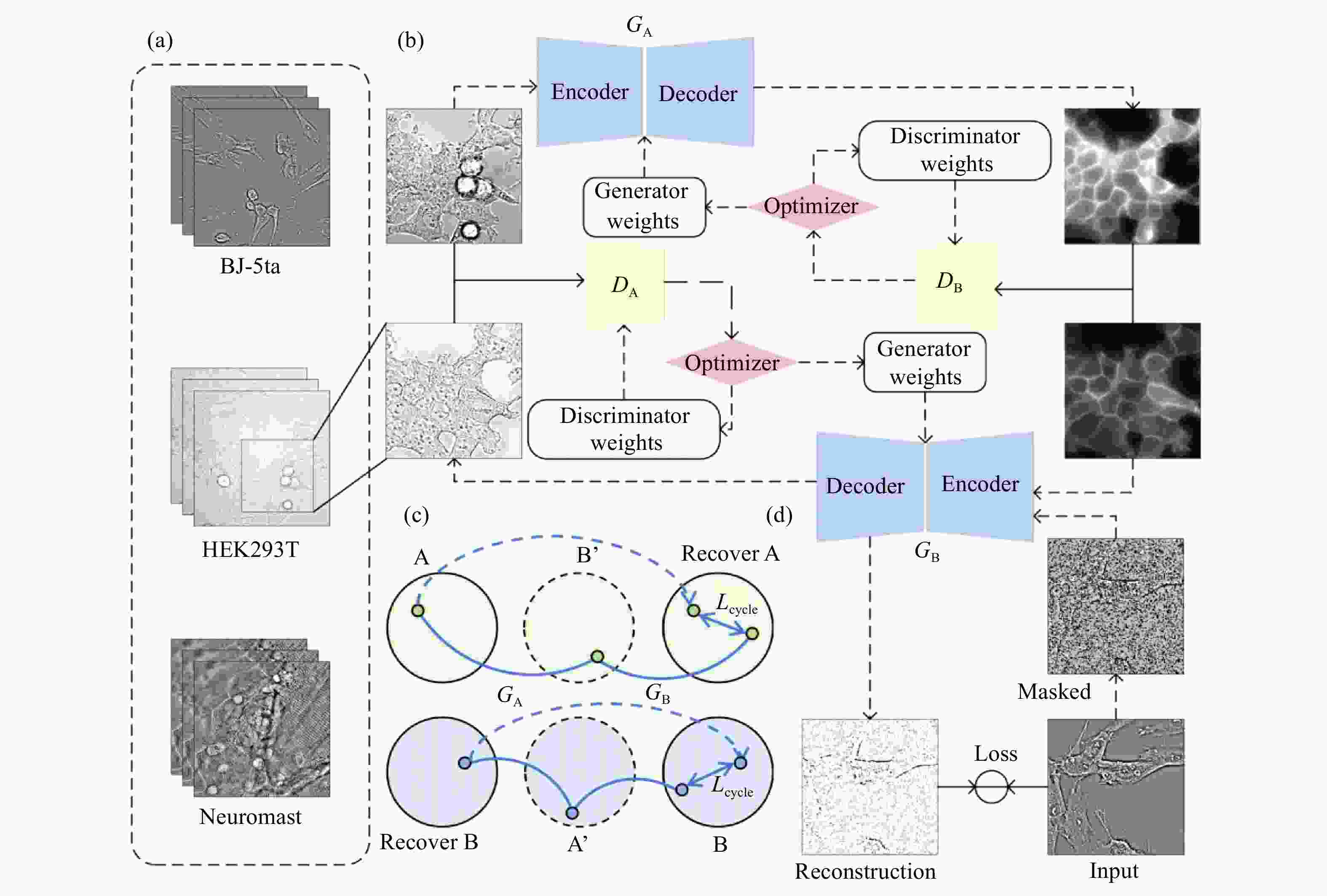

Figure 1. Overall architecture and training framework of the proposed MVS-CycleGAN for unpaired virtual staining. (a) Bright-field image datasets of different cell types, including BJ-5ta human fibroblasts, HEK293T human embryonic kidney cells, and zebrafish neuromasts; (b) schematic of the training pipeline; (c) cycle-consistency constraint mechanism; (d) masked self-supervised learning module.

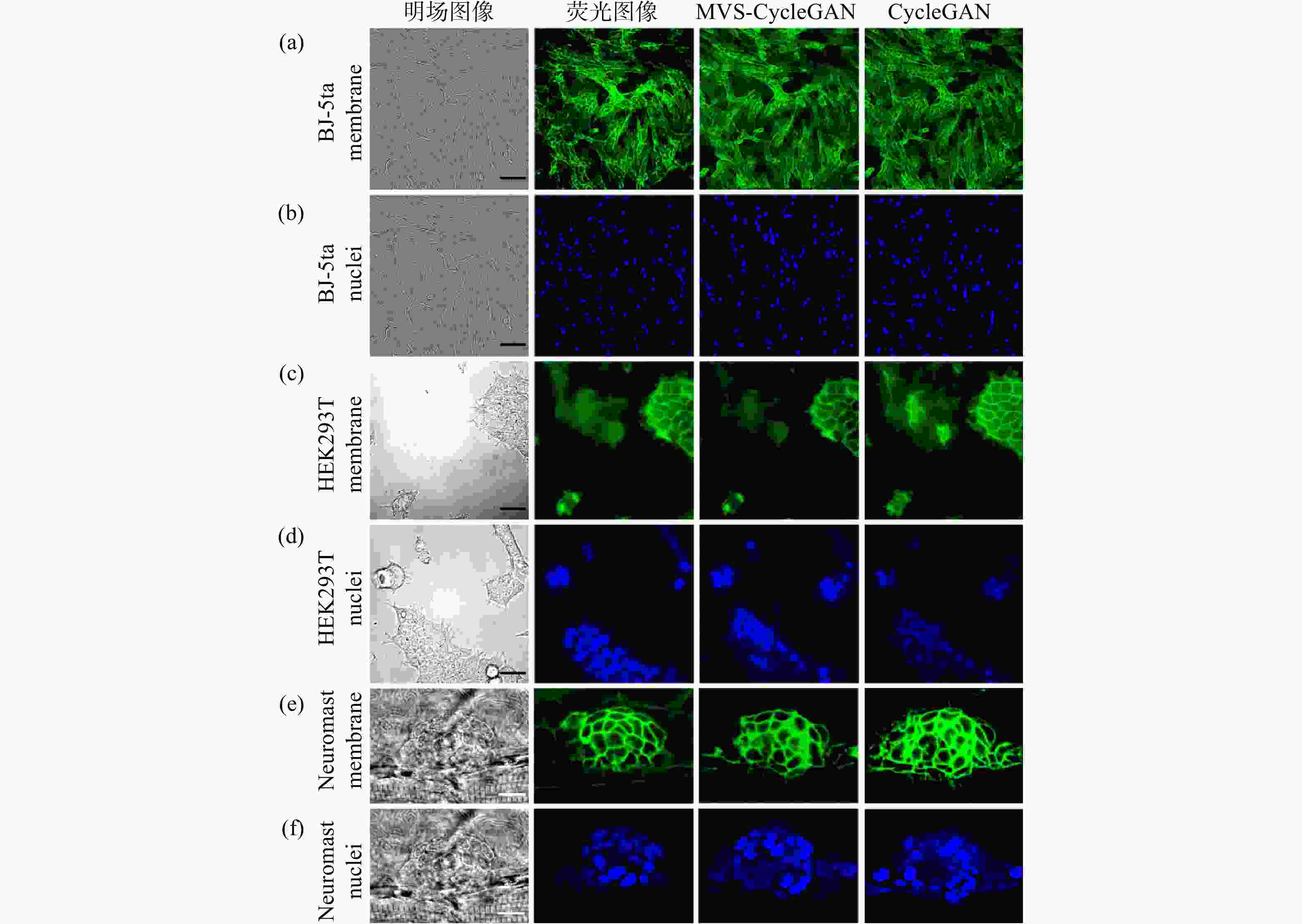

Figure 3. Virtual staining of cell membranes and nuclei in BJ-5ta, HEK293T, and Neuromast samples produced by different methods. Each panel compares the bright-field image, the real fluorescence image, and the virtual staining results: BJ-5ta membranes (a) and nuclei (b), scale bar: 50 µm; HEK293T membranes (c) and nuclei (d), scale bar: 50 µm; Neuromast membranes (e) and nuclei (f), scale bar: 10 µm.

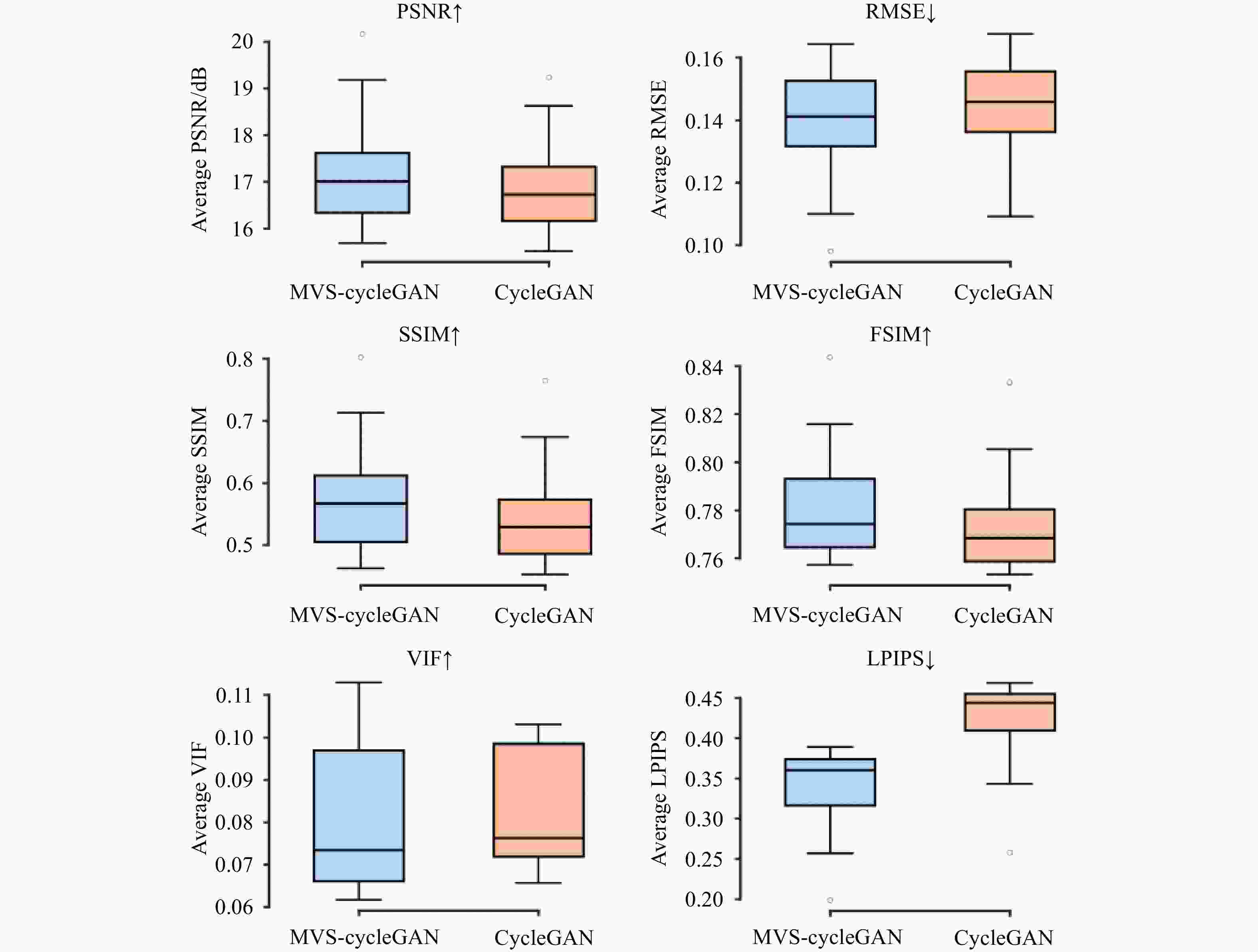

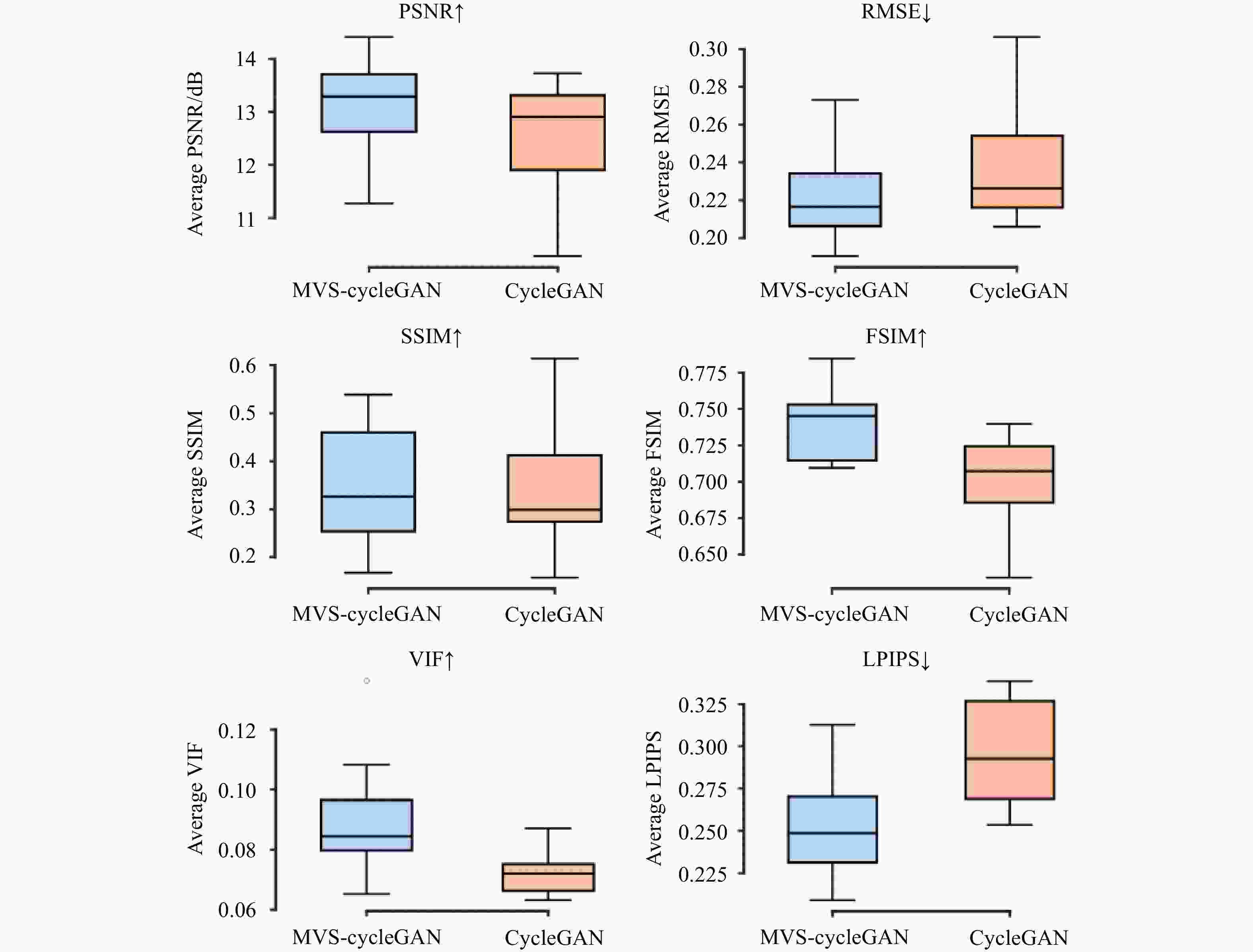

Table 1. Pixel-error and structural-similarity metrics for virtual staining of cells and organoids

| Model | Cell type | Structure | PSNR (dB) | RMSE | SSIM |
|---|---|---|---|---|---|
| MVS-CycleGAN | BJ-5ta | Membrane | 17.27 | 0.138 | 0.582 |
| CycleGAN | BJ-5ta | Membrane | 16.96 | 0.143 | 0.550 |
| MVS-CycleGAN | BJ-5ta | Nuclei | 13.40 | 0.215 | 0.724 |
| CycleGAN | BJ-5ta | Nuclei | 12.96 | 0.226 | 0.703 |
| MVS-CycleGAN | HEK293T | Membrane | 20.72 | 0.094 | 0.555 |
| CycleGAN | HEK293T | Membrane | 20.60 | 0.095 | 0.485 |
| MVS-CycleGAN | HEK293T | Nuclei | 19.80 | 0.106 | 0.763 |
| CycleGAN | HEK293T | Nuclei | 19.48 | 0.110 | 0.763 |
| MVS-CycleGAN | Neuromast | Membrane | 13.91 | 0.206 | 0.589 |
| CycleGAN | Neuromast | Membrane | 13.40 | 0.218 | 0.522 |
| MVS-CycleGAN | Neuromast | Nuclei | 13.07 | 0.224 | 0.345 |
| CycleGAN | Neuromast | Nuclei | 12.48 | 0.240 | 0.346 |
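For readers who want to recompute the pixel-error columns of Table 1, the sketch below shows the standard definitions of RMSE and PSNR. It assumes intensities normalized to the range [0, 1] (`data_range=1.0`). The page does not state the normalization or whether metrics are averaged per image, so treat both as assumptions.

```python
import numpy as np

def rmse(pred, target):
    """Root-mean-square error between two images of equal shape."""
    diff = np.asarray(pred, dtype=np.float64) - np.asarray(target, dtype=np.float64)
    return float(np.sqrt(np.mean(diff ** 2)))

def psnr(pred, target, data_range=1.0):
    """Peak signal-to-noise ratio in dB; data_range is the assumed intensity span."""
    err = rmse(pred, target)
    if err == 0.0:
        return float("inf")  # identical images
    return float(20.0 * np.log10(data_range / err))

# Toy example: a constant offset of 0.1 on a [0, 1] scale.
target = np.zeros((4, 4))
pred = np.full((4, 4), 0.1)
toy_rmse = rmse(pred, target)
toy_psnr = psnr(pred, target)
```

If PSNR is averaged over images, the tabulated value need not equal the PSNR computed from the tabulated mean RMSE, which may explain small differences between the two columns.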

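Table 2. Structural-similarity and perceptual-quality metrics for virtual staining of cells and organoids

| Model | Cell type | Structure | FSIM | LPIPS | VIF |
|---|---|---|---|---|---|
| MVS-CycleGAN | BJ-5ta | Membrane | 0.784 | 0.337 | 0.0820 |
| CycleGAN | BJ-5ta | Membrane | 0.776 | 0.418 | 0.0824 |
| MVS-CycleGAN | BJ-5ta | Nuclei | 0.565 | 0.474 | 0.0364 |
| CycleGAN | BJ-5ta | Nuclei | 0.516 | 0.521 | 0.0434 |
| MVS-CycleGAN | HEK293T | Membrane | 0.854 | 0.184 | 0.0827 |
| CycleGAN | HEK293T | Membrane | 0.845 | 0.191 | 0.0797 |
| MVS-CycleGAN | HEK293T | Nuclei | 0.830 | 0.171 | 0.0433 |
| CycleGAN | HEK293T | Nuclei | 0.823 | 0.183 | 0.0408 |
| MVS-CycleGAN | Neuromast | Membrane | 0.657 | 0.401 | 0.1573 |
| CycleGAN | Neuromast | Membrane | 0.650 | 0.412 | 0.0852 |
| MVS-CycleGAN | Neuromast | Nuclei | 0.740 | 0.253 | 0.0906 |
| CycleGAN | Neuromast | Nuclei | 0.701 | 0.296 | 0.0723 |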
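The FSIM improvements quoted in the abstract can be checked against the FSIM values in Table 2. The short sketch below assumes the percentages are relative gains over the CycleGAN baseline, computed as (MVS-CycleGAN − CycleGAN) / CycleGAN. The dictionary layout and function name are illustrative only.

```python
# FSIM values from Table 2: (MVS-CycleGAN, CycleGAN) per dataset and structure.
fsim = {
    ("BJ-5ta", "Membrane"): (0.784, 0.776),
    ("BJ-5ta", "Nuclei"): (0.565, 0.516),
    ("HEK293T", "Membrane"): (0.854, 0.845),
    ("HEK293T", "Nuclei"): (0.830, 0.823),
    ("Neuromast", "Membrane"): (0.657, 0.650),
    ("Neuromast", "Nuclei"): (0.740, 0.701),
}

def relative_improvement(ours, baseline):
    """Relative gain over the baseline, in percent."""
    return (ours - baseline) / baseline * 100.0

improvements = {
    key: round(relative_improvement(ours, base), 2)
    for key, (ours, base) in fsim.items()
}

for (dataset, structure), gain in improvements.items():
    print(f"{dataset} {structure}: +{gain:.2f}%")
```

Under this assumption the computed gains match the six percentages reported in the abstract (1.03%, 9.50%, 1.07%, 0.85%, 1.08%, and 5.56%).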

